Why Voice Activity Detection Datasets Matter

Enabling Real-Time Speech Recognition and Streaming

Voice activity detection (VAD) determines when speech begins and ends, allowing speech recognition systems to process only the relevant portions of audio. Research from the Speech and Audio Interaction Lab at Columbia University highlights that accurate VAD is essential for reducing latency and computation in real-time automatic speech recognition (ASR) systems. Good VAD datasets help models distinguish speech from non-speech even in challenging environments.

Supporting Telecommunication and VoIP Systems

Voice and video communication platforms depend on VAD to optimize bandwidth, activate noise suppression, and manage automatic gain control. High-quality datasets help systems correctly detect speech transitions, enabling clearer and more stable communication on low-bandwidth connections.

Improving Embedded and Low-Power Voice Interfaces

Mobile assistants, smart devices, and IoT sensors use lightweight VAD models to determine when to listen actively. These models rely on datasets with short segments, noisy variations, and device diversity to maintain accuracy in constrained hardware environments.

Core Components of Voice Activity Detection Datasets

Speech and Non-Speech Segment Labels

Datasets include labeled segments identifying when speech is present and when it is absent. These segments capture natural transitions between speech and silence, background noise, and human activity. Segment-level labels enable models to recognize speech onset and offset accurately.
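As a rough illustration of how segment-level labels relate to the frame-level targets many VAD models are trained on, the sketch below converts speech segments into per-frame 0/1 labels. The function name, the millisecond units, and the 10 ms hop are assumptions made for this example, not a convention taken from any particular dataset.

```python
def segments_to_frame_labels(speech_segments_ms, total_ms, hop_ms=10):
    """Turn (start_ms, end_ms) speech segments into one 0/1 label per frame.

    A frame is marked as speech when its start time falls inside a segment.
    Integer milliseconds are used to avoid floating-point rounding surprises.
    """
    n_frames = total_ms // hop_ms
    labels = [0] * n_frames
    for start_ms, end_ms in speech_segments_ms:
        first = max(0, -(-start_ms // hop_ms))        # ceil(start / hop)
        last = min(n_frames, -(-end_ms // hop_ms))    # ceil(end / hop)
        for i in range(first, last):
            labels[i] = 1
    return labels


# One speech segment from 20 ms to 50 ms inside an 80 ms clip.
print(segments_to_frame_labels([(20, 50)], total_ms=80, hop_ms=10))
```

Frames that are not covered by any segment stay at 0, so silence and background noise share a single non-speech class in this simplified view.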

Acoustic Diversity Across Environments

VAD datasets include a wide variety of recording environments such as homes, offices, vehicles, industrial facilities, and public spaces. Environmental diversity helps prevent models from overfitting to quiet or predictable conditions.

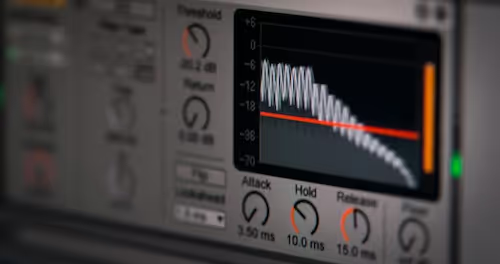

Temporal Annotations for Start and End Points

Precise temporal boundaries help models learn the exact moment speech begins and ends. These annotations are critical for streaming applications, where small timing errors can lead to clipped words, missed keywords, or unnecessary processing of silence.
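To make the idea of start and end points concrete, here is a minimal sketch that recovers onset and offset times from a sequence of per-frame speech decisions. The function name and the 10 ms hop are illustrative assumptions.

```python
def frame_labels_to_segments(labels, hop_ms=10):
    """Collapse per-frame 0/1 labels into (onset_ms, offset_ms) speech segments."""
    segments = []
    onset_frame = None
    for i, is_speech in enumerate(labels):
        if is_speech and onset_frame is None:
            onset_frame = i                      # speech just started
        elif not is_speech and onset_frame is not None:
            segments.append((onset_frame * hop_ms, i * hop_ms))
            onset_frame = None                   # speech just ended
    if onset_frame is not None:                  # clip ends while speech is active
        segments.append((onset_frame * hop_ms, len(labels) * hop_ms))
    return segments


print(frame_labels_to_segments([0, 1, 1, 0, 1], hop_ms=10))
```

With a 10 ms hop, a single mislabeled frame shifts a boundary by 10 ms, which is one way to see why small labeling errors matter in streaming use.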

Variability That Strengthens VAD Models

Speech Rate, Accent, and Speaker Characteristics

Speech varies significantly across speakers. Fast or slow speech, regional accents, and differences in pitch or timbre all affect how speech appears in acoustic form. Including diverse speakers improves the model’s ability to detect speech across demographics.

Noise, Overlapping Speech, and Reverberation

In real-world environments, speech often occurs alongside background noise or reverberation. Research from the Acoustical Society of Japan shows that overlapping events and room acoustics significantly affect VAD performance. Including these conditions helps models remain robust under difficult acoustic scenarios.

Device and Microphone Variability

Recording hardware affects how speech and non-speech signals are captured. Using samples recorded with smartphones, laptops, IoT microphones, and professional equipment helps keep VAD performance consistent across devices.

Techniques Used to Build Voice Activity Detection Datasets

Multi-Environment Field Recording

Teams collect audio samples in diverse settings, capturing both speech and environmental sounds across daily activities. Field recording ensures natural acoustic variability and supports generalization across real-world scenarios.

Long-Form Recording and Segmentation

VAD datasets often begin with long continuous audio recordings. Annotators later break these recordings into smaller speech and non-speech segments. This process preserves natural transition points and reduces annotation ambiguity.
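The segmentation step can be sketched as follows: given the speech segments marked on a long recording, the gaps between them are filled with non-speech segments so that the whole timeline is labeled. The function name, the label strings, and the requirement that segments do not overlap are assumptions for this example.

```python
def label_full_timeline(speech_segments_ms, total_ms):
    """Return (start_ms, end_ms, label) tuples covering the whole recording.

    Assumes speech segments do not overlap; raises ValueError otherwise.
    """
    timeline = []
    cursor = 0
    for start, end in sorted(speech_segments_ms):
        if start < cursor or end <= start or end > total_ms:
            raise ValueError(f"invalid or overlapping segment: {(start, end)}")
        if start > cursor:
            timeline.append((cursor, start, "non-speech"))
        timeline.append((start, end, "speech"))
        cursor = end
    if cursor < total_ms:
        timeline.append((cursor, total_ms, "non-speech"))
    return timeline


for row in label_full_timeline([(1000, 2500), (4000, 5000)], total_ms=6000):
    print(row)
```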

Synthetic Noise and Augmentation

To enhance robustness, dataset creators add synthetic noise such as traffic, machinery, or crowd chatter. Augmentation techniques help models adapt to environments that may be difficult to capture consistently in the field.
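One common way to add noise is to mix it into clean speech at a chosen signal-to-noise ratio (SNR). The sketch below shows that idea with plain Python lists; the function names are hypothetical, and a real pipeline would more likely operate on arrays loaded from audio files.

```python
import math


def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(v * v for v in samples) / len(samples))


def mix_at_snr(speech, noise, snr_db):
    """Add noise to speech so that the speech-to-noise RMS ratio equals snr_db.

    The noise is looped if it is shorter than the speech.
    """
    looped = [noise[i % len(noise)] for i in range(len(speech))]
    speech_rms, noise_rms = rms(speech), rms(looped)
    if speech_rms == 0 or noise_rms == 0:
        raise ValueError("speech and noise must both be non-silent")
    # SNR_dB = 20 * log10(speech_rms / scaled_noise_rms)
    gain = speech_rms / (noise_rms * 10 ** (snr_db / 20))
    return [s + gain * n for s, n in zip(speech, looped)]


# Toy signals standing in for recorded speech and noise.
speech = [math.sin(2 * math.pi * i / 16) for i in range(160)]
noise = [1.0, -1.0, 0.5]
noisy = mix_at_snr(speech, noise, snr_db=10.0)
```

The speech labels are unchanged by this kind of mixing, which is why additive noise is a cheap way to multiply the acoustic conditions a dataset covers.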

Annotation and Quality Assurance for VAD Data

Frame-Level or Segment-Level Verification

Annotators manually verify timestamps for speech onset and offset. Accurate boundary detection is essential for real-time applications. Annotation tools allow frame-level inspection to minimize timing errors.
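A verification pass can be partly automated by comparing a candidate annotation against a reference and flagging boundaries that differ by more than a tolerance. In the sketch below, the 20 ms tolerance, the function name, and the assumption that both lists contain the same segments in the same order are illustrative choices.

```python
def flag_boundary_errors(reference, candidate, tolerance_ms=20):
    """Return (index, onset_error_ms, offset_error_ms) for out-of-tolerance segments.

    Positive errors mean the candidate boundary is later than the reference.
    """
    if len(reference) != len(candidate):
        raise ValueError("segment counts differ; align segments before comparing")
    flagged = []
    for index, (ref, cand) in enumerate(zip(reference, candidate)):
        onset_error = cand[0] - ref[0]
        offset_error = cand[1] - ref[1]
        if abs(onset_error) > tolerance_ms or abs(offset_error) > tolerance_ms:
            flagged.append((index, onset_error, offset_error))
    return flagged


reference = [(1000, 2000), (3000, 4000)]
candidate = [(1030, 1990), (3005, 4010)]
print(flag_boundary_errors(reference, candidate))
```

Flagged segments would then go back to an annotator for frame-level inspection rather than being corrected automatically.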

Multi-Annotator Consistency Checks

Because speech boundaries can be subjective, especially in multilingual contexts, multiple annotators review samples independently. Discrepancies are resolved through consensus or secondary review, which keeps labels consistent across large datasets.
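Agreement between two annotators can be quantified before discrepancies are sent to review. The sketch below computes Cohen's kappa over binary frame labels. Using kappa here is an assumption for illustration, not a metric the process above prescribes.

```python
def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two equal-length sequences of 0/1 frame labels."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and equal in length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    p_a = sum(labels_a) / n          # fraction of speech frames, annotator A
    p_b = sum(labels_b) / n          # fraction of speech frames, annotator B
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)   # agreement expected by chance
    if expected == 1:
        return 1.0                   # both annotators used a single identical label
    return (observed - expected) / (1 - expected)


annotator_a = [1, 1, 0, 0]
annotator_b = [1, 0, 0, 0]
print(cohens_kappa(annotator_a, annotator_b))  # 0.5
```

Unlike raw percent agreement, kappa discounts agreement that would occur by chance, which matters when most frames in a recording are non-speech.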

Noise-Type Classification and Metadata Validation

Annotators classify background noise types and verify metadata such as recording location, device specifications, and acoustic conditions. Accurate metadata supports downstream noise modeling and system optimization.
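Part of metadata validation can be automated with simple schema checks. The field names and allowed noise types below are hypothetical and chosen only for this sketch; an actual dataset would define its own schema.

```python
# Hypothetical schema: field names and allowed values are illustrative only.
REQUIRED_FIELDS = {
    "location": str,
    "device": str,
    "noise_type": str,
    "sample_rate_hz": int,
}
ALLOWED_NOISE_TYPES = {"traffic", "machinery", "crowd", "none"}


def validate_metadata(record):
    """Return a list of human-readable problems found in one metadata record."""
    problems = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            problems.append(f"wrong type for {field}")
    noise = record.get("noise_type")
    if isinstance(noise, str) and noise not in ALLOWED_NOISE_TYPES:
        problems.append(f"unknown noise_type: {noise}")
    rate = record.get("sample_rate_hz")
    if isinstance(rate, int) and rate <= 0:
        problems.append("sample_rate_hz must be positive")
    return problems


record = {"location": "office", "noise_type": "rain", "sample_rate_hz": 16000}
print(validate_metadata(record))
```

Records that return a non-empty list would be routed back to annotators rather than silently dropped.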

Applications Enabled by Voice Activity Detection Datasets

Speech Recognition and Transcription Pipelines

VAD enables ASR systems to focus on speech segments and ignore silence or irrelevant noise. This improves transcription accuracy and reduces computational load.
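The gating idea can be illustrated with a toy energy-threshold detector that drops low-energy frames before audio reaches the recognizer. This is a deliberately simple baseline written for this example, with a hypothetical frame length and threshold; trained VAD models replace the fixed threshold with a learned decision.

```python
def frame_energy_vad(samples, frame_len, threshold):
    """Return one True/False speech decision per non-overlapping frame."""
    decisions = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        energy = sum(v * v for v in frame) / frame_len   # mean squared amplitude
        decisions.append(energy > threshold)
    return decisions


def keep_speech(samples, frame_len, threshold):
    """Concatenate only the frames that the energy detector marks as speech."""
    kept = []
    for i, is_speech in enumerate(frame_energy_vad(samples, frame_len, threshold)):
        if is_speech:
            kept.extend(samples[i * frame_len:(i + 1) * frame_len])
    return kept


# Silence, a short burst of signal, then silence again.
audio = [0.0] * 4 + [0.5, -0.5, 0.5, -0.5] + [0.0] * 4
print(frame_energy_vad(audio, frame_len=4, threshold=0.01))
print(keep_speech(audio, frame_len=4, threshold=0.01))
```

A fixed energy threshold fails as soon as background noise is louder than quiet speech, which is the gap that diverse, well-labeled VAD datasets are meant to close.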

Telecommunications and Conferencing

VAD improves audio quality in conferencing platforms by activating noise suppression and echo cancellation only when speech is detected. Clear segmentation enhances user experience.

Embedded and Real-Time Voice Interfaces

IoT devices, smart assistants, and mobile apps rely on VAD to detect when users are speaking. Accurate detection reduces power consumption and ensures responsive interaction.

Supporting VAD Dataset Development

Voice activity detection datasets are essential for real-time voice interfaces, telecommunication systems, and speech recognition pipelines. Their effectiveness depends on diverse acoustic conditions, precise temporal annotation, and thorough quality assurance. If your team needs help building, annotating, or validating VAD datasets, we can explore how DataVLab supports robust audio dataset development for advanced speech technologies.