3D Annotation Services for LiDAR and Point Cloud Data


DataVLab provides 3D annotation services for LiDAR, point clouds, depth maps, and multimodal sensor data used in autonomous driving, robotics, industrial automation, and geospatial AI. We deliver high-accuracy 3D bounding boxes, cuboids, point cloud segmentation, drivable area labeling, and temporal object tracking with multi-stage QA, calibration checks, and project-specific guidelines. Whether you need a pilot dataset or large-scale production labeling, our team supports consistent, scalable workflows for advanced perception models.

High-accuracy 3D bounding boxes, cuboids, and point cloud segmentation for perception AI.

Specialized 3D labeling workflows for LiDAR, depth maps, and multimodal sensor fusion.

Geometric, temporal, and class-level QA for reliable 3D annotation at scale.

3D annotation is the process of labeling LiDAR, point cloud, and depth data so computer vision and perception models can detect, segment, localize, and track objects in 3D space. It is a core requirement for autonomous driving, robotics, mapping, and smart infrastructure systems. DataVLab provides 3D data annotation services delivered by trained specialists who understand point cloud geometry, occlusion handling, sensor noise, and multimodal alignment.

We annotate 3D bounding boxes and cuboids, point cloud semantic and instance segmentation, ground plane labels, drivable areas, lane-related surfaces, and temporal object tracks across sequences. Our teams follow geometric rules for rotation, orientation, center alignment, and scale while handling occlusions, sparse regions, partial captures, and varying point densities. We also support multimodal workflows that align LiDAR with camera and radar data.

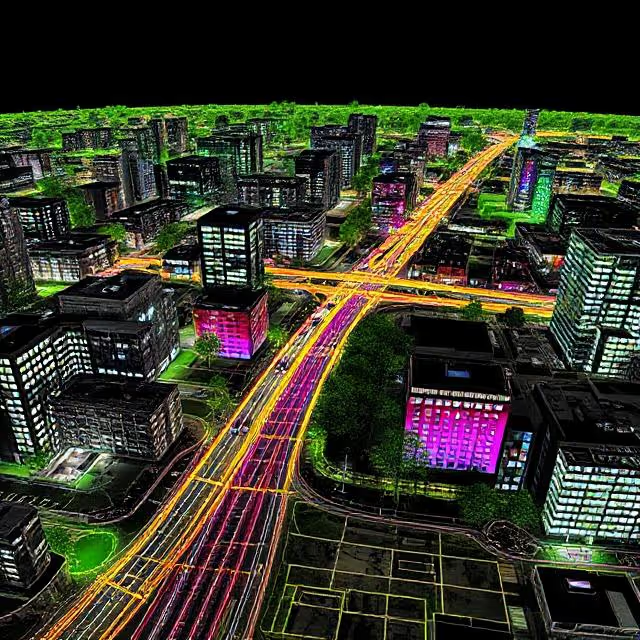

Our 3D annotation services support autonomous vehicles, ADAS, delivery robots, warehouse automation, mobile mapping systems, smart city analytics, industrial inspection, construction monitoring, and geospatial intelligence. We work with LiDAR point clouds, depth maps, and sensor-fusion datasets, and can adapt class ontologies and labeling rules to your model requirements.

3D annotation quality depends on geometric precision and consistent review. Our workflows include calibration checks, task sampling, multi-step QA, class audits, overlap checks, and targeted review of difficult frames to improve consistency across annotators and sequences.

For sensitive projects, DataVLab supports secure delivery workflows, including GDPR-aligned processing and EU-only annotation options where required.

3D Annotation Capabilities for LiDAR and Point Cloud Projects

From cuboids and segmentation to tracking and sensor fusion, DataVLab supports 3D perception teams with trained annotators, project-specific guidelines, and structured QA workflows.

3D Bounding Boxes and Cuboids

Geometrically accurate cuboids for 3D object detection

We annotate vehicles, pedestrians, cyclists, machinery, obstacles, and infrastructure with 3D bounding boxes and cuboids that follow project rules for rotation, dimensions, center alignment, and tightness.

Point Cloud Segmentation

Semantic and instance segmentation for sparse and dense point clouds

We segment roads, vegetation, buildings, warehouse assets, industrial equipment, and custom classes with depth-aware labeling and consistent class boundaries for training and validation datasets.
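One common way to quantify how consistent class boundaries are between two labeling passes is per-class intersection over union (IoU) on the per-point labels. The sketch below is illustrative only and is not a description of DataVLab's internal tooling; the function name `per_class_iou` and the sample class names are assumptions.

```python
def per_class_iou(labels_a, labels_b):
    """Compare two per-point label lists for the same point cloud.

    labels_a, labels_b: sequences of class names, one entry per point,
    in the same point order. Returns {class_name: IoU}.
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("both labelings must cover the same points")
    result = {}
    for cls in set(labels_a) | set(labels_b):
        pairs = list(zip(labels_a, labels_b))
        # Points both passes assigned to this class.
        inter = sum(1 for a, b in pairs if a == cls and b == cls)
        # Points at least one pass assigned to this class (never zero here).
        union = sum(1 for a, b in pairs if a == cls or b == cls)
        result[cls] = inter / union
    return result


# Hypothetical example: an annotator pass versus a reviewer pass.
annotator = ["road", "road", "road", "vegetation", "building"]
reviewer = ["road", "road", "vegetation", "vegetation", "building"]
for cls, iou in sorted(per_class_iou(annotator, reviewer).items()):
    print(f"{cls}: {iou:.2f}")
```

A low IoU on one class usually points to an ambiguous boundary rule in the guidelines rather than to a single annotator error.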

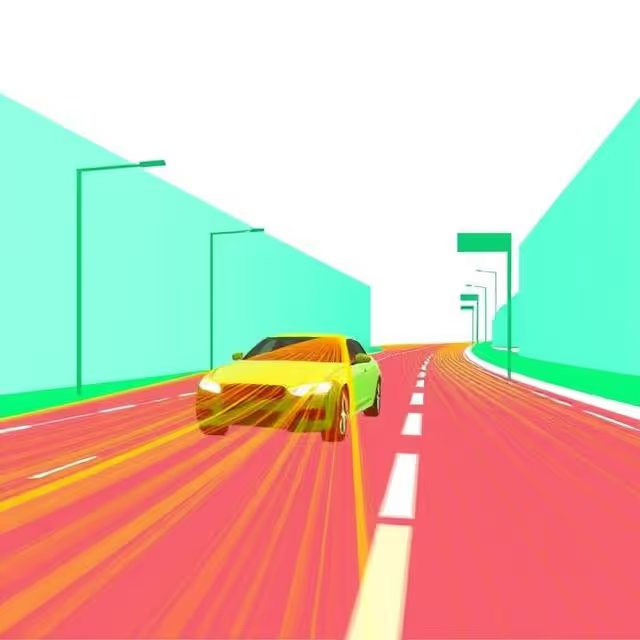

Drivable Area and Ground Plane Annotation

Surface and navigable-space labels for planning and mobility models

We annotate drivable zones, walkable regions, curbs, ramps, lane-adjacent surfaces, and ground planes to support autonomous mobility, delivery robotics, and navigation systems.

3D Tracking Across Temporal Sequences

Consistent object identities across time and motion

We label and review object tracks across sequential frames to maintain identity continuity, reduce drift, and improve temporal consistency in dynamic scenes with occlusion and motion blur.
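As a rough illustration of a temporal consistency check, the sketch below flags frame-to-frame jumps in a track's center that would imply an implausible speed, which often indicates an identity switch or a drifting cuboid. The function name `find_track_jumps`, the track layout, and the speed threshold are assumptions for this example.

```python
import math


def find_track_jumps(track, frame_dt, max_speed):
    """Flag implausible motion between consecutive labeled frames.

    track: list of (frame_index, x, y) tuples sorted by frame_index,
           positions in meters.
    frame_dt: time between consecutive frames, in seconds.
    max_speed: highest plausible speed for the class, in m/s.
    Returns a list of (previous_frame, frame, implied_speed).
    """
    jumps = []
    for (f0, x0, y0), (f1, x1, y1) in zip(track, track[1:]):
        dt = (f1 - f0) * frame_dt
        if dt <= 0:
            raise ValueError("track must be sorted by strictly increasing frame")
        speed = math.hypot(x1 - x0, y1 - y0) / dt
        if speed > max_speed:
            jumps.append((f0, f1, speed))
    return jumps


# Hypothetical 10 Hz sequence: the object moves 1 m per frame, then jumps 10 m.
track = [(0, 0.0, 0.0), (1, 1.0, 0.0), (2, 2.0, 0.0), (3, 12.0, 0.0)]
for prev_frame, frame, speed in find_track_jumps(track, frame_dt=0.1, max_speed=40.0):
    print(f"frames {prev_frame}->{frame}: implied speed {speed:.1f} m/s")
```

Flagged frame pairs are candidates for human review, not automatic corrections, since real objects can accelerate sharply.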

Multimodal Sensor Fusion Annotation

Aligned labels across LiDAR, radar, and camera streams

We annotate synchronized multimodal datasets by aligning image and 3D labels according to calibration rules, sensor timing, and ontology guidelines for robust perception model training.
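To make the role of calibration concrete, here is a minimal sketch of projecting a LiDAR point into a camera image with a pinhole model. It assumes a 3x4 LiDAR-to-camera extrinsic matrix and intrinsics `fx`, `fy`, `cx`, `cy`, and it ignores lens distortion and timestamp offsets; all names and values are illustrative.

```python
def project_point(point_lidar, extrinsic, fx, fy, cx, cy):
    """Project one LiDAR point into pixel coordinates.

    point_lidar: (x, y, z) in the LiDAR frame, meters.
    extrinsic: 3 rows of 4 numbers, [R | t], mapping LiDAR to camera frame.
    fx, fy, cx, cy: pinhole intrinsics in pixels.
    Returns (u, v), or None if the point is behind the camera.
    """
    x, y, z = point_lidar
    # Rigid transform from the LiDAR frame to the camera frame.
    xc, yc, zc = (r[0] * x + r[1] * y + r[2] * z + r[3] for r in extrinsic)
    if zc <= 0:
        return None
    # Perspective division followed by the intrinsic mapping.
    return (fx * xc / zc + cx, fy * yc / zc + cy)


# Assumed axis convention: LiDAR x forward, y left, z up;
# camera x right, y down, z forward; both sensors at the same origin.
LIDAR_TO_CAMERA = [
    [0, -1, 0, 0],
    [0, 0, -1, 0],
    [1, 0, 0, 0],
]
print(project_point((10.0, -1.0, 0.0), LIDAR_TO_CAMERA, 700.0, 700.0, 640.0, 360.0))
print(project_point((-5.0, 0.0, 0.0), LIDAR_TO_CAMERA, 700.0, 700.0, 640.0, 360.0))
```

If projected cuboid corners land visibly off the object in the image, the calibration or the sensor timing is suspect before the label is.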

3D Annotation Quality Control

Structured QA for geometric precision and label consistency

Our QA process includes class audits, cuboid tightness checks, rotation and orientation review, overlap validation, sequence-level consistency checks, and targeted corrections for difficult frames.

Discover How Our Process Works

Project Definition

Sampling & Calibration

Annotation

Review & Assurance

Delivery

Explore Industry Applications

We provide solutions to different industries, ensuring high-quality annotations tailored to your specific needs.

We provide high-quality annotation services to improve your AI's performance.

Annotation & Labeling for AI

Unlock the full potential of your AI application with our expert data labeling tech. We ensure high-quality annotations that accelerate your project timelines.

3D Cuboid Annotation Services

High-precision 3D cuboid annotation for LiDAR, depth sensors, stereo vision, and multimodal perception systems.

LiDAR Annotation Services

High-accuracy LiDAR annotation for 3D perception, autonomous driving, mapping, and sensor fusion applications.

Sensor Fusion Annotation Services

Accurate annotation across LiDAR, camera, radar, and multimodal sensor streams to support fused perception and holistic scene understanding.

ADAS and Autonomous Driving Annotation Services

High-accuracy annotation for autonomous driving, ADAS perception models, vehicle safety systems, and multimodal sensor datasets.

Robotics Data Annotation Services

High-precision annotation for robot perception models, including navigation, object interaction, SLAM, depth sensing, grasping, and 3D scene understanding.

FAQs

Answers to common questions we receive from clients.

3D annotation adds spatial labels to three-dimensional data, typically LiDAR point clouds, depth data from multi-camera setups, or 3D meshes. It includes 3D bounding boxes (cuboids defined by position, dimensions, and orientation in 3D space), point cloud segmentation (assigning class labels to individual points), and 3D polylines or planes for road markings and surfaces. 3D annotation is essential for autonomous driving, robotics, and any application where a system must understand the physical geometry of its environment rather than just its 2D appearance.

3D cuboid annotation places an oriented cuboid around each object in a 3D point cloud, capturing the object's center position, dimensions (length, width, height), and yaw angle (heading). Unlike 2D bounding boxes, 3D cuboids describe objects in physical space with real-world dimensions, allowing downstream systems to calculate distances, sizes, and trajectories. This is essential for autonomous vehicles (knowing a pedestrian is 1.2 meters wide at 15 meters distance) and robotics (knowing a box is 30 cm tall to plan a grasp). The annotation challenge is that objects in point clouds are often sparse or partially occluded, so annotators must check each cuboid from bird's-eye, front, and side views.
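The cuboid parameters described above (center, dimensions, yaw) can be sketched as a small data structure. This is a minimal illustration assuming a z-up frame with yaw measured counter-clockwise around the z axis; the class name `Cuboid` and its field names are hypothetical, and real datasets differ in axis and angle conventions.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    cx: float      # center x, meters
    cy: float      # center y, meters
    cz: float      # center z, meters
    length: float  # extent along the heading direction, meters
    width: float   # extent across the heading direction, meters
    height: float  # vertical extent, meters
    yaw: float     # heading in radians, counter-clockwise around z

    def corners(self):
        """Return the 8 corners as (x, y, z) tuples in the sensor frame."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        pts = []
        for dx in (-0.5, 0.5):
            for dy in (-0.5, 0.5):
                for dz in (-0.5, 0.5):
                    lx, ly = dx * self.length, dy * self.width
                    # Rotate the local offset by yaw, then translate.
                    pts.append((self.cx + c * lx - s * ly,
                                self.cy + s * lx + c * ly,
                                self.cz + dz * self.height))
        return pts

    def contains(self, x, y, z):
        """True if the point lies inside the cuboid (boundary included)."""
        px, py = x - self.cx, y - self.cy
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        # Inverse yaw rotation moves the point into the box frame.
        lx = c * px + s * py
        ly = -s * px + c * py
        return (abs(lx) <= self.length / 2
                and abs(ly) <= self.width / 2
                and abs(z - self.cz) <= self.height / 2)


# Hypothetical vehicle 10 m ahead, heading rotated 90 degrees.
car = Cuboid(10.0, 0.0, 0.75, 4.0, 2.0, 1.5, math.pi / 2)
print(math.hypot(car.cx, car.cy))   # ground distance to the center
print(car.contains(10.0, 1.9, 0.75))
```

Because the box carries metric dimensions and a heading, distance and footprint follow directly from the label rather than from image-space heuristics.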

3D annotation is significantly more complex than 2D image annotation. Annotators must work in three simultaneous views (bird's eye, front, side), place cuboids with precise position and orientation, handle occlusions where objects are only partially represented by points, and maintain consistent object tracking across frames in sequential data. Annotation speed is typically 10 to 30 cuboids per hour for LiDAR data, compared to hundreds of bounding boxes per hour for 2D images. Annotation tools matter significantly: 3D annotation platforms with auto-fitting, ground plane detection, and copy-propagation across frames substantially reduce annotation time.

Common 3D annotation formats include KITTI (popular in autonomous driving research, stores one object per line in text files with type, dimensions, location, and rotation), nuScenes JSON (for multi-sensor autonomous driving datasets), PCD with custom label files (for point cloud segmentation), and custom JSON or binary formats for proprietary training pipelines. For fusion applications combining LiDAR with cameras, annotations must include calibration data so that 3D labels can be projected onto 2D images and vice versa. DataVLab delivers 3D annotation datasets in your required format with validated coordinate systems and sensor calibration compatibility.
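As an illustration of what a delivered label file can look like, the sketch below parses one line of a KITTI object label file, which lists type, truncation, occlusion, observation angle, a 2D box, 3D dimensions, 3D location, and rotation around the camera Y axis. The class and function names are hypothetical, and the sample line uses made-up values.

```python
from dataclasses import dataclass


@dataclass
class KittiObject:
    obj_type: str
    truncated: float
    occluded: int
    alpha: float
    bbox: tuple        # 2D box in pixels: left, top, right, bottom
    dimensions: tuple  # height, width, length in meters
    location: tuple    # x, y, z in camera coordinates, meters
    rotation_y: float  # rotation around the camera Y axis, radians


def parse_kitti_line(line):
    """Parse one whitespace-separated KITTI object label line."""
    fields = line.split()
    if len(fields) < 15:
        raise ValueError(f"expected at least 15 fields, got {len(fields)}")
    v = [float(x) for x in fields[1:15]]
    return KittiObject(
        obj_type=fields[0],
        truncated=v[0],
        occluded=int(v[1]),
        alpha=v[2],
        bbox=tuple(v[3:7]),
        dimensions=tuple(v[7:10]),
        location=tuple(v[10:13]),
        rotation_y=v[13],
    )


# Illustrative line with invented values.
sample = "Car 0.00 0 -1.58 587.01 173.33 614.12 200.12 1.65 1.67 3.64 -0.65 1.71 46.70 -1.59"
obj = parse_kitti_line(sample)
print(obj.obj_type, obj.dimensions, obj.location, obj.rotation_y)
```

Note that KITTI orders dimensions as height, width, length and expresses location in the camera frame, so converting from a LiDAR-frame cuboid needs the calibration mentioned above.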

3D annotation is primarily used in autonomous driving (vehicles, pedestrians, cyclists, road furniture in LiDAR point clouds), mobile robotics and warehouse automation (object detection and mapping for navigation and manipulation), construction site monitoring (tracking equipment and personnel in 3D space), precision agriculture (plant and crop structure analysis from drone LiDAR), and industrial inspection (3D measurement and defect detection from structured light or photogrammetry). Any application requiring spatial understanding beyond what 2D cameras can provide is a candidate for 3D annotation.

3D annotation quality depends on cuboid fit accuracy (the cuboid should tightly enclose all points belonging to the object), orientation accuracy (the yaw angle must match the object's actual heading direction), class accuracy (correct class assignment including distinguishing similar object types like truck vs. van), and completeness (all instances must be labeled even when point density is low). For sequential LiDAR data, tracking consistency is an additional quality dimension: each object must maintain a consistent ID across frames and the same physical object must not be labeled as multiple different tracks. DataVLab implements automated checks for empty cuboids, overlapping cuboids, and tracking ID consistency alongside human quality review.
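A minimal sketch of what automated checks of this kind might look like follows. It covers empty cuboids and two simple tracking ID rules; overlap validation and detection of one object split across several tracks need geometry and are left out. The label layout (`frame`, `track_id`, `cls`, `num_points`) and the function name are assumptions, not DataVLab's actual schema.

```python
from collections import defaultdict


def run_basic_checks(labels, min_points=1):
    """Return a list of (issue, frame, track_id) tuples.

    labels: list of dicts with keys "frame", "track_id", "cls", and
            "num_points" (LiDAR points inside the cuboid).
    """
    issues = []
    seen_in_frame = set()
    classes_by_track = defaultdict(set)
    for lab in labels:
        frame, track_id = lab["frame"], lab["track_id"]
        # Empty cuboid: too few points to support the label.
        if lab["num_points"] < min_points:
            issues.append(("empty_cuboid", frame, track_id))
        # The same track ID must not appear twice in one frame.
        if (frame, track_id) in seen_in_frame:
            issues.append(("duplicate_id_in_frame", frame, track_id))
        seen_in_frame.add((frame, track_id))
        classes_by_track[track_id].add(lab["cls"])
    # A track should keep one class for its whole lifetime.
    for track_id, classes in classes_by_track.items():
        if len(classes) > 1:
            issues.append(("class_changes_within_track", None, track_id))
    return issues


# Hypothetical labels for two frames.
labels = [
    {"frame": 0, "track_id": 1, "cls": "car", "num_points": 120},
    {"frame": 0, "track_id": 2, "cls": "pedestrian", "num_points": 0},
    {"frame": 1, "track_id": 1, "cls": "truck", "num_points": 95},
    {"frame": 1, "track_id": 3, "cls": "car", "num_points": 40},
    {"frame": 1, "track_id": 3, "cls": "car", "num_points": 38},
]
for issue in run_basic_checks(labels):
    print(issue)
```

Checks like these catch mechanical errors cheaply, which leaves human reviewers free to focus on fit, orientation, and class judgment.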

Custom service offering

Up to 10x Faster

Accelerate your AI training with high-speed annotation workflows that outperform traditional processes.

AI-Assisted

Seamless integration of manual expertise and automated precision for superior annotation quality.

Advanced QA

Tailor-made quality control protocols to ensure error-free annotations on a per-project basis.

Highly Specialized

Work with industry-trained annotators who bring domain-specific knowledge to every dataset.

Ethical Outsourcing

Fair working conditions and transparent processes to ensure responsible and high-quality data labeling.

Proven Expertise

A track record of success across multiple industries, delivering reliable and effective AI training data.

Scalable Solutions

Tailored workflows designed to scale with your project’s needs, from small datasets to enterprise-level AI models.

Global Team

A worldwide network of skilled annotators and AI specialists dedicated to precision and excellence.

