Organ, Tissue and Tumor Segmentation

Organ, tissue, and tumor segmentation form the backbone of many advanced medical imaging applications. Accurate delineation of anatomical structures is essential for AI systems that must operate safely in clinical workflows. When segmentation is inconsistent, the measurements and predictions built on it become unreliable. When segmentation is precise, AI behaves more like a dependable assistant for radiologists, oncologists, and surgeons. This article explains how organ, tissue, and tumor segmentation works, why it is so difficult, and which practices make these models robust in the real world.

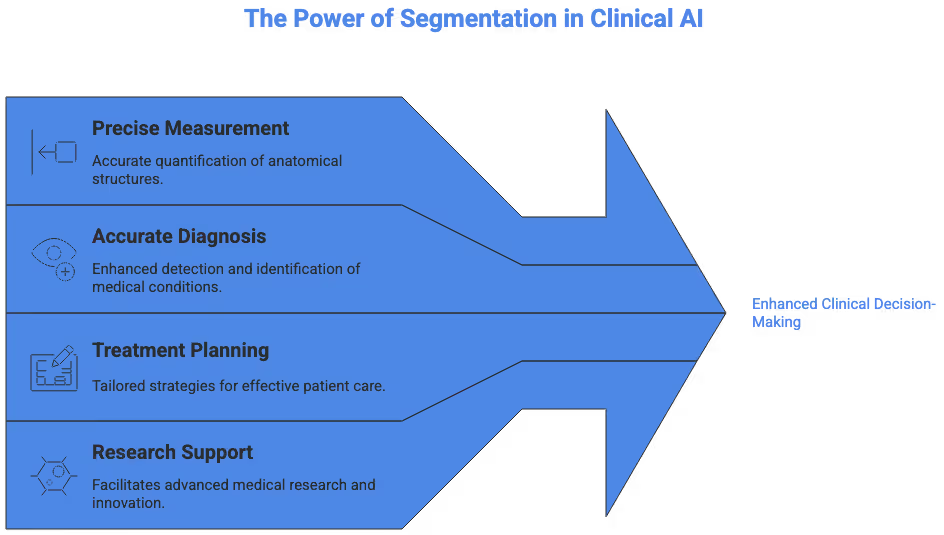

The Role of Segmentation in Modern Medical AI

Segmentation is more than a preprocessing step. It defines the structures that downstream tasks depend on, influencing everything from automated measurements to cancer staging. Clinicians increasingly rely on AI systems that extract volumes, shapes, boundaries, or internal tissue patterns, and those systems only perform well when segmentation datasets are built correctly.

Segmentation enables quantitative imaging, where tumors can be measured precisely over time, organs can be evaluated for structural changes, and treatment response can be assessed more objectively. The shift toward quantitative radiology is visible across leading medical centers and research institutions such as Johns Hopkins Medicine, which publishes extensive resources on cancer biology and imaging patterns.
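To make the idea of quantitative imaging concrete, here is a minimal sketch of how a tumor volume could be derived from a segmentation mask and tracked between two scans. It assumes the mask is a nested list indexed as [z][y][x] and that the voxel spacing in millimetres is known from the scan header. The function names `mask_volume_ml` and `percent_change` are illustrative, and a production pipeline would normally work on array types rather than nested lists.

```python
from math import prod


def mask_volume_ml(mask, spacing_mm):
    """Volume of a binary mask in millilitres.

    mask: nested lists indexed [z][y][x]; truthy values mark the structure.
    spacing_mm: (dz, dy, dx) voxel size in millimetres (assumed known).
    """
    voxel_count = sum(1 for plane in mask for row in plane for v in row if v)
    voxel_volume_mm3 = prod(spacing_mm)
    return voxel_count * voxel_volume_mm3 / 1000.0  # 1 mL = 1000 mm^3


def percent_change(baseline_ml, followup_ml):
    """Relative volume change between two time points, in percent."""
    return 100.0 * (followup_ml - baseline_ml) / baseline_ml


# Toy masks: 8 voxels at baseline, 4 voxels at follow-up.
baseline = [[[1, 1], [1, 1]], [[1, 1], [1, 1]]]
followup = [[[1, 1], [1, 1]], [[0, 0], [0, 0]]]
spacing = (5.0, 1.0, 1.0)  # thick slices, fine in-plane resolution

v0 = mask_volume_ml(baseline, spacing)
v1 = mask_volume_ml(followup, spacing)
change = percent_change(v0, v1)
```

Because the volume scales directly with the voxel count, a boundary that is off by a single voxel layer can shift the measured change noticeably for small lesions, which is why mask quality matters for treatment response assessment.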

How Organ, Tissue and Tumor Segmentation Works

Segmentation draws precise boundaries around structures of interest. These boundaries can describe whole organs, sub-regions, tissue layers, or pathological entities like masses and lesions. Although the concept seems straightforward, segmentation is clinically and technically complex. Different modalities show structures with varying clarity. An organ looks different on MRI than on CT, and tumors vary dramatically even within the same organ system.

Segmentation must therefore combine radiologic knowledge, machine learning techniques, and annotation standards that ensure the model sees the anatomy the same way experts do.

Clinical and Technical Challenges in Segmentation

Segmentation performance is influenced by variability in patient anatomy, imaging protocols, and disease presentation. These challenges often require expert annotators and reviewers to coordinate with radiologists to verify boundaries. Imaging protocols also differ between hospitals: slice thickness, contrast timing, field of view, and patient positioning each create failure modes for models that were not trained on comparable variation.

Understanding these challenges helps guide dataset preparation, annotation strategy, and model configuration.

Organ Segmentation Challenges Across Modalities

Organ segmentation appears simple for large structures like the liver, lungs, or brain, yet the real-world complexity is significant. Imaging noise, partial volume effects, inter-patient size variability, congenital anomalies, and surgical changes can all impact accuracy.

MRI-based organ segmentation is particularly challenging because MRI sequences differ in contrast properties. T1-weighted, T2-weighted, diffusion-weighted, and post-contrast sequences each highlight different tissues. Institutions such as Mayo Clinic offer detailed explanations of MRI modalities that help contextualize how imaging parameters influence segmentation difficulty.

These modality differences make consistent organ segmentation a difficult but essential requirement for developing trustworthy AI.

Tissue Segmentation and Microstructural Complexity

Tissue segmentation requires identifying layers or microregions within a structure. In brain imaging, this could mean separating gray matter, white matter, and cerebrospinal fluid. In musculoskeletal imaging, tissue segmentation might involve distinguishing tendons from muscle or fat. In pathology whole-slide images, tissue segmentation identifies epithelium, stroma, necrotic regions, or immune clusters.

Algorithms must recognize subtle differences in texture, density, and intensity. Tissue appearance can change under different imaging protocols or scanning conditions, so segmentation methods require careful calibration. Including tissue-level labels in AI datasets helps models capture clinical nuance rather than overfitting to a limited set of patterns.

Understanding Tumor Segmentation and Oncologic Variability

Tumor segmentation is one of the most important and difficult tasks in medical imaging AI. Tumors vary in size, shape, margin clarity, internal heterogeneity, and enhancement patterns. Even within a single organ system, tumors can appear vastly different from patient to patient. Tumors may infiltrate surrounding tissues, cross anatomical boundaries, or present with edema, necrosis, calcification, or hemorrhage. These variations make tumor segmentation extremely challenging.

Oncology departments emphasize that accurate boundaries directly influence staging, treatment decisions, and prognosis. For example, the detailed clinical guides published by Stanford Medicine on brain tumors provide valuable context on tumor morphology and imaging patterns.

AI systems trained for tumor segmentation must therefore integrate radiologic nuance and account for complex patterns that go far beyond simple intensity differences.

Brain Tumor Segmentation

Brain tumor segmentation requires precise delineation of tumor core, edema, and enhancing regions. MRI sequences play complementary roles: FLAIR highlights edema, T1 post-contrast highlights active tumor, and T2 shows broader tissue changes. Deep learning systems must fuse these modalities to produce clinically meaningful outputs. Incorrect boundaries can alter volumetric measurements and impact treatment planning.
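One simple way to fuse sequences is to stack them as input channels so the model sees every contrast for each voxel at once. The sketch below illustrates that idea under stated assumptions: the sequences are already co-registered to the same voxel grid, each is flattened to a list of intensities, and the names `stack_sequences`, `flair`, `t1ce`, and `t2` are hypothetical labels chosen for this example. Other fusion strategies exist, and this is not presented as the method any particular system uses.

```python
def stack_sequences(sequences, order=("flair", "t1ce", "t2")):
    """Fuse co-registered MRI sequences into per-voxel feature tuples.

    sequences: dict mapping a sequence name to a flat list of voxel
    intensities. All lists must describe the same voxel grid.
    """
    lengths = {len(sequences[name]) for name in order}
    if len(lengths) != 1:
        raise ValueError("sequences must be co-registered to the same voxel grid")
    # zip pairs up the i-th voxel of every sequence, in the requested order.
    return list(zip(*(sequences[name] for name in order)))


# Two voxels, three contrasts each (toy intensities).
fused = stack_sequences(
    {"flair": [0.1, 0.9], "t1ce": [0.0, 0.8], "t2": [0.2, 0.7]}
)
```

The length check is the important part of the sketch: if the sequences are not aligned voxel for voxel, stacking them silently pairs unrelated tissue and the model learns from inconsistent inputs.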

Brain anatomy is highly variable. Tumor mass effect can distort normal structures, pushing midline or compressing ventricles. Segmentation models must learn to differentiate pathological tissue from normal variants. High-quality datasets often include expert-reviewed masks and multiple MRI sequences per patient.

Research institutions frequently develop computational models aimed at handling this complexity. The Stanford Medicine guides mentioned above remain a useful reference for understanding brain tumor morphology and imaging interpretation.

Liver Tumor Segmentation

Liver tumor segmentation is notoriously difficult due to the liver’s complex vascular network and the variability of lesion appearances. Hepatocellular carcinoma, metastases, cysts, and benign lesions all present differently. Contrast timing during CT or MRI dramatically influences lesion visibility. Without consistent timing, lesions may appear faint or nearly invisible.

Institutions such as Massachusetts General Hospital provide comprehensive clinical guidance on liver cancer, offering valuable context on lesion presentation and imaging characteristics.

The well-known Liver Tumor Segmentation (LiTS) challenge has further highlighted sources of variability. Deep models must handle heterogeneous appearances, irregular shapes, and lesion multiplicity. Incorporating diverse imaging protocols is crucial for developing robust solutions for liver tumor segmentation at scale.

Lung Tumor Segmentation

Lung tumor segmentation requires navigating unique complexities. Lung tissue has high air content, creating large intensity differences from soft tissues. Tumors may appear as solid nodules, part-solid nodules, or ground-glass opacities. Inflammatory processes and infections can mimic tumor patterns, increasing false positives.
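The intensity gap between air and soft tissue is usually handled by windowing CT values before they reach a model. On the Hounsfield scale, air sits near -1000 HU and water at 0 HU. The sketch below clips values to a window and rescales them to the range 0 to 1. The default center of -600 HU and width of 1500 HU are a commonly quoted lung window, used here as an assumption rather than a recommendation, and `apply_window` is an illustrative name.

```python
def apply_window(hu_values, center=-600.0, width=1500.0):
    """Clip Hounsfield units to a display window and rescale to [0, 1].

    The defaults describe a typical lung window (an assumption for this
    sketch); other tissues need a different center and width.
    """
    low = center - width / 2.0
    high = center + width / 2.0
    return [(min(max(hu, low), high) - low) / (high - low) for hu in hu_values]


# Values below the window clip to 0.0, values above it clip to 1.0.
windowed = apply_window([-2000.0, -600.0, 150.0, 1000.0])
```

A window that is too narrow can flatten ground-glass opacities into the background, while one that is too wide reduces contrast between solid nodules and vessels, so the choice is worth validating against the target findings.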

The American Lung Association provides essential clinical guidance on lung cancer detection and imaging.

Deep learning models trained for lung tumor segmentation must be capable of handling nodules of different densities, margins, and anatomical positions such as near the pleura or vascular structures.

Integrating Clinical Expertise into Segmentation Workflows

Segmentation requires collaborative work between annotators and clinical reviewers. Radiologists often verify boundaries to ensure anatomical accuracy, while quality control reviewers ensure consistency across the dataset. This is especially important for longitudinal studies where tumor boundaries may shift over time because of treatment effects.

Segmentation workflows may incorporate multi-reader consensus, where several experts annotate the same region and discrepancies are resolved through discussion. This improves the reliability of the reference masks, which in turn makes model evaluation more trustworthy.
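A multi-reader workflow can be sketched with a simple majority vote, plus a list of disputed voxels to bring to the discussion. This is one possible consensus rule among several, shown under the assumption that each reader's mask is stored as a set of (z, y, x) voxel coordinates. The function names are illustrative.

```python
from collections import Counter


def majority_vote(reader_masks):
    """Keep voxels marked by more than half of the readers.

    reader_masks: list of sets, each holding the (z, y, x) voxels that
    one reader marked as belonging to the structure.
    """
    votes = Counter(voxel for mask in reader_masks for voxel in mask)
    threshold = len(reader_masks) / 2.0
    return {voxel for voxel, n in votes.items() if n > threshold}


def disputed_voxels(reader_masks):
    """Voxels marked by at least one reader but not by all of them."""
    union = set().union(*reader_masks)
    intersection = set.intersection(*reader_masks)
    return union - intersection


reader_a = {(0, 0, 0), (0, 0, 1)}
reader_b = {(0, 0, 0), (0, 0, 1), (0, 0, 2)}
reader_c = {(0, 0, 0)}

consensus = majority_vote([reader_a, reader_b, reader_c])
to_review = disputed_voxels([reader_a, reader_b, reader_c])
```

Majority voting gives a quick first-pass consensus, while the disputed set shows reviewers exactly where the readers disagreed, which tends to be at ambiguous boundaries.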

In large-scale AI development, clinical oversight becomes essential. Without expert validation, segmentation masks may contain subtle errors that propagate through the entire model pipeline.

Machine Learning Methods Used for Segmentation

Machine learning models for segmentation are built with architectures and frameworks such as U-Net, DeepLab, nnU-Net, and transformer-based designs. These models learn spatial hierarchies and capture fine-grained patterns that differentiate tissues. Training requires large datasets with high-quality annotations. If annotations contain noise or subjective variation, models will replicate those inaccuracies.

Modern segmentation pipelines also incorporate pre-processing steps such as intensity normalization, bias field correction, or resolution standardization. These steps ensure the model receives data in a consistent format even if scans originate from different hospitals.
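As an illustration of intensity normalization, the sketch below applies z-score normalization using statistics from foreground voxels only. It assumes background voxels have an intensity at or below a threshold (zero by default, which suits skull-stripped MRI) and that intensities are given as a flat list. The name `zscore_normalize` and its `foreground_threshold` parameter are assumptions for this example, and bias field correction and resampling are not covered.

```python
from statistics import fmean, pstdev


def zscore_normalize(intensities, foreground_threshold=0.0):
    """Z-score intensities using foreground statistics only.

    Voxels at or below foreground_threshold are treated as background
    and set to 0.0 in the output.
    """
    foreground = [v for v in intensities if v > foreground_threshold]
    if not foreground:
        raise ValueError("no foreground voxels found")
    mean = fmean(foreground)
    std = pstdev(foreground)
    if std == 0:
        raise ValueError("foreground intensities are constant")
    return [
        (v - mean) / std if v > foreground_threshold else 0.0
        for v in intensities
    ]


normalized = zscore_normalize([0.0, 100.0, 200.0, 300.0])
```

Computing the statistics on foreground voxels keeps large empty backgrounds from dominating the mean and standard deviation, which would otherwise compress the tissue intensities into a narrow range.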

Handling Clinical Variability in Segmentation Datasets

Dataset diversity is essential. If a segmentation model is trained only on a narrow set of cases, it is likely to fail in real-world deployment. Clinical variability includes patient demographics, comorbidities, disease severity, and imaging differences. Segmentation models must handle metal implants, motion artifacts, atypical anatomies, and post-surgical appearances.

This is particularly important for multi-organ segmentation where anatomical structures overlap or change during disease progression. Ensuring robust generalization requires careful curation of training and testing datasets.
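One common safeguard when curating training and testing sets is to split by patient rather than by scan, so that follow-up scans of the same person never land on both sides. The sketch below shows that idea. It assumes each scan is described by a (patient_id, scan_id) pair, and `split_by_patient` is an illustrative helper, not part of any specific toolkit.

```python
import random


def split_by_patient(scans, test_fraction=0.2, seed=0):
    """Split scans so that no patient appears in both train and test.

    scans: list of (patient_id, scan_id) pairs.
    """
    patients = sorted({patient_id for patient_id, _ in scans})
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(patients)
    n_test = max(1, round(len(patients) * test_fraction))
    test_patients = set(patients[:n_test])
    train = [s for s in scans if s[0] not in test_patients]
    test = [s for s in scans if s[0] in test_patients]
    return train, test


# Five patients with two scans each.
scans = [(f"p{i}", f"p{i}_scan{j}") for i in range(5) for j in range(2)]
train, test = split_by_patient(scans, test_fraction=0.2, seed=0)
```

The same grouping idea can be extended to hospitals or scanner models when the goal is to estimate how a model behaves on sites it has never seen.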

Validation and Benchmarking

Segmentation performance is measured using metrics such as Dice score, which quantifies volumetric overlap, and Hausdorff distance and surface distance, which quantify boundary error. Together these metrics evaluate how well predicted boundaries match expert annotations. External validation on independent datasets is crucial. Many research institutions share anonymized imaging datasets to promote standardized evaluation.
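The two most frequently cited metrics can be sketched in a few lines. The example assumes masks are stored as sets of (z, y, x) voxel coordinates and uses a brute-force Hausdorff computation over all mask voxels. Evaluation toolkits typically restrict the computation to surface voxels and use faster algorithms, so treat this as an illustration of the definitions rather than a reference implementation.

```python
from math import dist


def dice_score(pred, truth):
    """Dice = 2 * |A & B| / (|A| + |B|) for masks given as voxel sets."""
    if not pred and not truth:
        return 1.0  # convention assumed here: two empty masks agree
    return 2.0 * len(pred & truth) / (len(pred) + len(truth))


def hausdorff_distance(pred, truth, spacing=(1.0, 1.0, 1.0)):
    """Symmetric Hausdorff distance in physical units (brute force)."""
    if not pred or not truth:
        raise ValueError("Hausdorff distance is undefined for an empty mask")

    def to_physical(p):
        return tuple(c * s for c, s in zip(p, spacing))

    a = [to_physical(p) for p in pred]
    b = [to_physical(p) for p in truth]

    def directed(src, dst):
        # Largest distance from any point in src to its nearest point in dst.
        return max(min(dist(p, q) for q in dst) for p in src)

    return max(directed(a, b), directed(b, a))


# A four-voxel line shifted by one voxel along x.
truth = {(0, 0, 0), (0, 0, 1), (0, 0, 2), (0, 0, 3)}
pred = {(0, 0, 1), (0, 0, 2), (0, 0, 3), (0, 0, 4)}

dice = dice_score(pred, truth)
hd = hausdorff_distance(pred, truth)
hd_mm = hausdorff_distance(pred, truth, spacing=(1.0, 1.0, 2.0))
```

Dice summarizes overall overlap, while Hausdorff distance reports the single worst boundary error, so the two can disagree about which of two models is better.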

Clinical benchmarking goes beyond metrics. Radiologists evaluate segmentation outputs in context, assessing whether boundaries make sense given the anatomy. A model with a high Dice score may still fail to capture clinically relevant regions.
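A toy case shows how a high Dice score can hide a clinically relevant miss. The sketch assumes masks are sets of (z, y, x) voxels and that the reference lesions are already separated into individual sets. `lesion_recall` is an illustrative lesion-level measure defined for this example, where a lesion counts as found if any predicted voxel overlaps it.

```python
def dice_score(pred, truth):
    """Dice overlap for masks given as sets of voxel coordinates."""
    total = len(pred) + len(truth)
    return 1.0 if total == 0 else 2.0 * len(pred & truth) / total


def lesion_recall(pred, lesions):
    """Fraction of reference lesions touched by at least one predicted voxel."""
    if not lesions:
        raise ValueError("need at least one reference lesion")
    detected = sum(1 for lesion in lesions if lesion & pred)
    return detected / len(lesions)


# One large lesion (1000 voxels) and one small lesion (10 voxels).
large = {(0, y, x) for y in range(25) for x in range(40)}
small = {(5, 0, x) for x in range(10)}

# The model segments the large lesion perfectly and misses the small one.
prediction = set(large)

overall_dice = dice_score(prediction, large | small)
recall = lesion_recall(prediction, [large, small])
```

Here the overall Dice stays above 0.99 even though half of the lesions were missed, which is the kind of gap that a radiologist's contextual review is meant to catch.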

Institutions like UCSF Radiology publish resources explaining imaging protocols and clinical interpretation that help contextualize benchmarking efforts.

Real-World Deployment Considerations

Implementing segmentation models in hospitals requires consideration of workflow integration, latency, compute resources, and compliance with regulatory standards. Clinical environments require models that produce stable outputs and integrate into PACS, radiology viewers, or treatment planning systems.

Quality and reliability are paramount. The model must perform consistently across scanners and protocols. Hospitals may also require visual explanations or overlays to verify segmentation accuracy before using the output for decisions.

Improving Model Robustness Through Annotation Strategy

Annotation strategy directly influences model performance. Clear guidelines ensure that annotators interpret boundaries consistently. Using small pilot batches to refine instructions helps prevent early mistakes from propagating. Incorporating radiologist feedback into instructions improves anatomical accuracy.

Segmentation guidelines may include rules for ambiguous regions, boundaries that are difficult to see, or cases with artifacts. Documenting these rules helps standardize annotations across large teams.

Ethical and Clinical Reliability Considerations

Segmentation is not only a technical challenge but also an ethical responsibility. AI used in diagnosis or treatment planning carries clinical implications. Errors in segmentation can lead to incorrect measurements, misleading analyses, or poor treatment decisions. Datasets must be built with careful oversight to mitigate risk.

In radiology and oncology, segmentation quality can influence patient outcomes. Ensuring clinical reliability is fundamental to deploying safe medical AI systems.

If You Are Working on an Imaging Project, Reach Out to Us at DataVLab

If you are working on an AI or medical imaging project that involves organ, tissue, or tumor segmentation, our team at DataVLab would be glad to support you. We can help build expert-validated segmentation datasets at clinical quality, support complex annotation pipelines, and provide guidance on dataset preparation for high-performance medical AI systems.