Why Pose and Gaze Estimation Datasets Matter

Understanding Human Orientation Through Head Pose

Head pose estimation datasets include images and video sequences labeled with yaw, pitch, and roll angles that describe how a person's head is oriented in three-dimensional space. These datasets help models understand body language, driver attentiveness, classroom engagement, and human intent. Cambridge University’s Computer Vision Group provides insights into how head pose modeling underpins many human-behavior applications. Without accurate pose datasets, models fail to infer orientation when faces are partially turned or captured from non-frontal angles.
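To make the yaw, pitch, and roll labels concrete, here is a minimal sketch that turns one such label into a rotation matrix and a facing direction. The axis convention and composition order are assumptions chosen for illustration (datasets differ, and each one documents its own), and the function names are hypothetical.

```python
import math


def matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def head_rotation_matrix(yaw_deg, pitch_deg, roll_deg):
    """Build a 3x3 rotation matrix from head pose angles in degrees.

    Assumed convention (not universal): yaw rotates about the vertical
    y-axis, pitch about the horizontal x-axis, roll about the z-axis,
    composed as R = R_yaw * R_pitch * R_roll.
    """
    y, p, r = (math.radians(a) for a in (yaw_deg, pitch_deg, roll_deg))
    r_yaw = [[math.cos(y), 0.0, math.sin(y)],
             [0.0, 1.0, 0.0],
             [-math.sin(y), 0.0, math.cos(y)]]
    r_pitch = [[1.0, 0.0, 0.0],
               [0.0, math.cos(p), -math.sin(p)],
               [0.0, math.sin(p), math.cos(p)]]
    r_roll = [[math.cos(r), -math.sin(r), 0.0],
              [math.sin(r), math.cos(r), 0.0],
              [0.0, 0.0, 1.0]]
    return matmul(matmul(r_yaw, r_pitch), r_roll)


def facing_direction(rotation):
    """Rotate the forward axis (0, 0, 1) by the head rotation."""
    return [rotation[i][2] for i in range(3)]


if __name__ == "__main__":
    # A head turned 30 degrees in yaw with a slight downward or upward pitch.
    print(facing_direction(head_rotation_matrix(30.0, 10.0, 0.0)))
```

Because conventions vary between datasets, a conversion like this is usually the first thing to verify when combining labels from more than one source.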

Inferring Human Attention Through Gaze Direction

Gaze estimation datasets teach models how to determine where a person is looking. These datasets include markers for pupil location, gaze vectors, and sometimes 3D fixation points. Gaze research from the Max Planck Institute shows how attention inference depends heavily on fine-grained eye-movement patterns. Gaze estimation is essential for automotive driver monitoring, retail analytics, UX testing, medical diagnostics, and safety systems.

Capturing Eye Movement Dynamics Through Eye Tracking

Eye tracking datasets record eye movement frame by frame. They often include frame-level pupil annotations, eye landmarks, blink information, and fixation sequences. MIT’s Eye Tracking Lab highlights how eye motion patterns reveal cognitive load, attention shifts, and subconscious behavioral signals. These datasets power research into cognition, AR/VR interfaces, human-computer interaction, and navigation behavior.

Core Components of High-Quality Pose & Gaze Datasets

Precise Angle and Vector Annotations

Head pose datasets require accurate yaw, pitch, and roll measurements, while gaze datasets rely on 2D or 3D gaze vectors that indicate viewing direction, sometimes paired with the fixation point itself. These labels must be precise: an angular error of only a few degrees moves the implied fixation point noticeably at typical viewing distances, and a model trained on such labels learns the error as if it were signal. Consistent annotation standards ensure that models learn stable geometric interpretations rather than noisy approximations.
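The cost of a small labeling error can be estimated with basic trigonometry. The sketch below measures the angle between a reference gaze vector and a labeled one, then converts an angular error into a displacement of the fixation point on a surface at a given distance. The function names and the 60 cm viewing distance are illustrative assumptions.

```python
import math


def angular_error_deg(gaze_ref, gaze_label):
    """Angle in degrees between two 3D gaze vectors (any non-zero length)."""
    dot = sum(a * b for a, b in zip(gaze_ref, gaze_label))
    norm_ref = math.sqrt(sum(a * a for a in gaze_ref))
    norm_label = math.sqrt(sum(b * b for b in gaze_label))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cosine = max(-1.0, min(1.0, dot / (norm_ref * norm_label)))
    return math.degrees(math.acos(cosine))


def fixation_offset(distance, error_deg):
    """Displacement of the fixation point on a surface facing the viewer.

    The result is in the same unit as `distance`.
    """
    return distance * math.tan(math.radians(error_deg))


if __name__ == "__main__":
    # Assumed scenario: a 2 degree label error at a 60 cm viewing distance.
    print(round(fixation_offset(60.0, 2.0), 2), "cm")
```

Under these assumptions, a 2 degree error displaces the fixation point by roughly 2 cm, which is enough to land on a different button or word on a screen.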

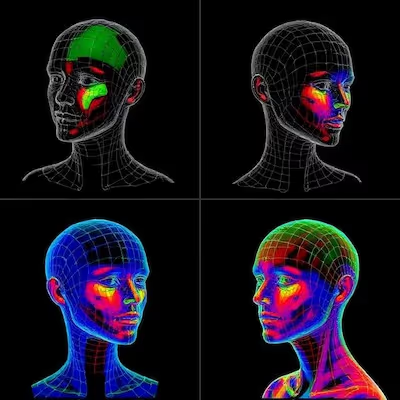

High-Resolution Eye and Facial Detail

Gaze and eye tracking tasks demand imagery where the eyes, eyelids, and surrounding regions are clearly visible. Small features such as pupil size or subtle eyelid movements must be preserved. Low-resolution images introduce noise that prevents models from detecting micro-movements accurately. Proper camera settings and controlled capture conditions are essential for these datasets.

Multiple Viewpoints and Camera Distances

To generalize across environments, datasets must include faces at different angles and distances. A gaze model trained only on frontal views tends to fail in environments like retail stores, offices, or cars, where camera placement is unpredictable. Multi-view datasets teach models to infer gaze direction regardless of camera orientation.

Sources of Variability That Strengthen These Datasets

Lighting, Shadows, and Reflections

Lighting dramatically affects how eyes and head contours appear. Shadows obscure facial features, while bright reflections can distort pupil detection. Including varied lighting conditions improves model performance across indoor and outdoor environments. Strong datasets intentionally collect samples under backlit, low-light, diffuse, and high-contrast conditions.

Eyewear, Hair, and Partial Occlusions

Eyeglasses introduce reflections that interfere with gaze estimation, and sunglasses hide the eyes entirely. Hair, hats, and scarves also block parts of the face. Pose and gaze datasets must represent these occlusions to avoid brittle behavior, because models trained only on unobstructed faces tend to fail in everyday use.

Natural Expression and Micro-Movements

Expressions subtly modify eye shape and head geometry. Even small movements such as squinting, eyebrow lifts, or brief glances must be captured. Models trained on eye tracking datasets perform best when those datasets include natural, unposed behavior rather than artificial, constrained expressions.

Techniques Used to Build Pose & Gaze Estimation Datasets

Active IR Eye Tracking Systems

Some datasets use infrared-based eye trackers that capture precise corneal reflections and pupil centers. These systems provide highly accurate ground-truth labels that are then paired with RGB images for model training. IR systems reduce noise caused by ambient light and help capture subtle gaze shifts.

Motion Capture for Head Pose Annotation

Head pose datasets sometimes rely on motion-capture systems or 3D head rigs that provide accurate orientation angles. These ground-truth signals are synchronized with video frames to produce precise labels. When calibrated correctly, this method yields extremely reliable pose data.

Multi-Camera Synchronization

Gaze and pose datasets often require multi-camera setups to capture accurate 3D information. Synchronized cameras positioned at different angles allow triangulation of gaze vectors or head orientation. This technique enhances dataset diversity and ensures that models learn from realistic multi-view scenarios.
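As an illustration of the triangulation step, the sketch below uses the midpoint method: each camera contributes a ray toward the same feature (for example, a pupil center), and the 3D estimate is the midpoint of the shortest segment between the two rays. It assumes both rays are already expressed in a shared, calibrated world frame; the function name is hypothetical, and production pipelines often use more robust formulations.

```python
def triangulate_midpoint(origin_a, dir_a, origin_b, dir_b, eps=1e-12):
    """Estimate a 3D point from two camera rays using the midpoint method.

    Each ray is origin + s * direction. Raises ValueError when the rays
    are (nearly) parallel, since no unique closest point exists.
    """
    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    w = [p - q for p, q in zip(origin_a, origin_b)]
    a = dot(dir_a, dir_a)
    b = dot(dir_a, dir_b)
    c = dot(dir_b, dir_b)
    d = dot(dir_a, w)
    e = dot(dir_b, w)
    denom = a * c - b * b
    if abs(denom) < eps:
        raise ValueError("rays are parallel; cannot triangulate")

    # Ray parameters of the mutually closest points.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    point_a = [o + s * k for o, k in zip(origin_a, dir_a)]
    point_b = [o + t * k for o, k in zip(origin_b, dir_b)]
    return [(p + q) / 2.0 for p, q in zip(point_a, point_b)]


if __name__ == "__main__":
    # Two cameras 2 units apart, both looking at a feature at (0, 0, 2).
    print(triangulate_midpoint((-1, 0, 0), (1, 0, 2), (1, 0, 0), (-1, 0, 2)))
```

With noisy detections the two rays rarely intersect exactly, and the distance between the two closest points can double as a quality signal for the resulting label.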

Annotation and Quality Assurance for Pose & Gaze Datasets

Identity-Independent Annotation

Unlike face identification datasets, pose and gaze datasets do not require identity labels. Instead, they focus on geometric accuracy. Annotators must be trained to label eye landmarks, head angles, and gaze fixations precisely, ensuring that the dataset remains consistent across thousands of frames.

Frame-Level and Sequence-Level QA

Quality assurance checks must verify both per-frame accuracy and temporal continuity. Inconsistencies in gaze direction across adjacent frames can mislead behavioral models. Multi-stage QA ensures that gaze vectors, head angles, and eye landmarks remain coherent over time.
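A sequence-level check can be as simple as measuring the angle between gaze vectors in adjacent frames and flagging large jumps. The sketch below is one hedged way to do this; the 15 degree threshold and the function names are assumptions that would need tuning to the frame rate and task. Genuine saccades are fast, so flagged frames are candidates for human review rather than automatic rejection.

```python
import math


def angle_between_deg(u, v):
    """Angle in degrees between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def flag_gaze_jumps(gaze_vectors, max_jump_deg=15.0):
    """Return indices of frames whose gaze differs from the previous frame
    by more than max_jump_deg (a hypothetical, tunable threshold)."""
    flagged = []
    for i in range(1, len(gaze_vectors)):
        if angle_between_deg(gaze_vectors[i - 1], gaze_vectors[i]) > max_jump_deg:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    sequence = [(0.0, 0.0, 1.0), (0.02, 0.0, 1.0), (1.0, 0.0, 0.0), (1.0, 0.0, 0.05)]
    print(flag_gaze_jumps(sequence))  # indices to send back for review
```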

Handling Hard Cases and Edge Conditions

Difficult samples such as extreme head rotations, closed eyes, fast blinking, or partially occluded pupils should not be removed. These edge cases help models generalize to real-world conditions. Annotators must understand how to label them correctly rather than treating them as noise.
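One way to keep hard cases in the dataset without corrupting training is to record explicit flags alongside each label, so downstream code can decide which supervision signals a frame supports. The schema below is a hypothetical sketch, not a standard format: all field and function names are assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class FrameLabel:
    """Hypothetical per-frame annotation record."""
    frame_index: int
    head_pose_deg: Tuple[float, float, float]  # (yaw, pitch, roll)
    # None when the annotator cannot determine gaze (e.g. eyes closed).
    gaze_vector: Optional[Tuple[float, float, float]] = None
    eyes_closed: bool = False
    pupil_occluded: bool = False


def supervises_gaze(label: FrameLabel) -> bool:
    """A frame can supervise gaze only if a gaze label exists and eyes are open."""
    return label.gaze_vector is not None and not label.eyes_closed


def split_supervision(labels: List[FrameLabel]):
    """Separate frames usable for gaze training from pose-only frames.

    Hard frames are kept: they still supervise head pose and blink state.
    """
    gaze_frames = [l for l in labels if supervises_gaze(l)]
    pose_only_frames = [l for l in labels if not supervises_gaze(l)]
    return gaze_frames, pose_only_frames


if __name__ == "__main__":
    labels = [
        FrameLabel(0, (5.0, -2.0, 0.0), gaze_vector=(0.1, 0.0, -1.0)),
        FrameLabel(1, (6.0, -2.0, 0.0), eyes_closed=True),
        FrameLabel(2, (70.0, 0.0, 3.0), gaze_vector=(0.9, 0.0, -0.4), pupil_occluded=True),
    ]
    gaze_frames, pose_only_frames = split_supervision(labels)
    print(len(gaze_frames), len(pose_only_frames))
```

Keeping the occlusion flag separate from the gaze label lets a team train on partially occluded frames while still reporting accuracy on them as a distinct subset.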

Applications Enabled by Pose & Gaze Estimation Datasets

Automotive Driver Monitoring Systems

Carmakers use gaze and head pose datasets to train systems that detect distraction, drowsiness, and lack of awareness. Accurate gaze estimation helps identify unsafe behaviors early. These systems rely heavily on datasets that represent real driving conditions.

Behavioral and Attention Analytics

Retail, education, and workplace analytics use gaze data to understand attention patterns, engagement, and navigation behavior. For example, researchers studying visual focus in classrooms rely on pose and gaze datasets to interpret group behavior.

AR/VR and Human-Computer Interaction

In AR/VR environments, gaze and head pose determine interaction flow. Eye tracking datasets enable hands-free control, foveated rendering, and adaptive interfaces that respond to user attention. These applications require highly precise and low-latency gaze estimation.

Supporting Pose and Gaze Dataset Development

Pose and gaze estimation datasets are essential for AI systems that understand human attention, orientation, and behavior. Their quality depends on precise annotation, diverse capture conditions, controlled environments, and rigorous QA. If your team is building head pose, gaze estimation, or eye tracking models and needs expert dataset creation, annotation, or verification workflows, we can explore how DataVLab supports these specialized biometric AI projects with high-precision data.